Makalu Member Analytics

Introduction

Selecting the right primary entities and building proper relationships among them is the heart of enterprise application design, and this holds in both the NoSQL and SQL worlds. In the NoSQL world, one further factor is important: designing a proper structure for the primary entities and for the queries around them. Unlike in SQL solutions, in most NoSQL solutions the structure of an entity resembles the real-world object it represents, which makes the solution more flexible. However, the more complex the object being represented, the more complex its data structure and design become, and the more use cases it has to serve.

Background

In healthcare data, the basic records are medical, pharmacy, lab, and eligibility data. The natural starting point would be to use these as primary entities, and then to pre-calculate and de-normalize as much as possible so that runtime queries only read pre-aggregated data. However, once we start building relationships and adding abstraction, it becomes clear that all of these records are derived from one primary entity: the member. That is, “a member has diagnoses; a member has medical, pharmacy, lab, and eligibility records” describes the real world more directly than “a claim has a member” or “a claim belongs to a member”. So, with even a little experience in healthcare data, one tends to move to a member-centric design. The term “Member Analytics” in Makalu is based on this concept, and the whole migration to “Member Analytics” is a migration of the design from a claim-centric (record-centric) to a member-centric way of thinking. In the following sections we describe the flexibility and scalability we gained and the complexities we had to face while introducing this concept.

Problem

The major difficulty in developing a scalable big-data solution is finding a good data distribution. A record-centric approach distributes data well, but every metric and every analytic then goes down to the individual record. As a result, either the entire database is queried for each report, or all reports are pre-calculated, which makes the application rigid. We therefore need a middle way that stays flexible while keeping queries from hitting the entire database each time. Member Analytics avoids both concerns by pre-calculating all member-level metrics and limiting runtime queries to aggregation over those member-level values. The core business concept behind this is: “Store only primary records and build all reports during runtime”.

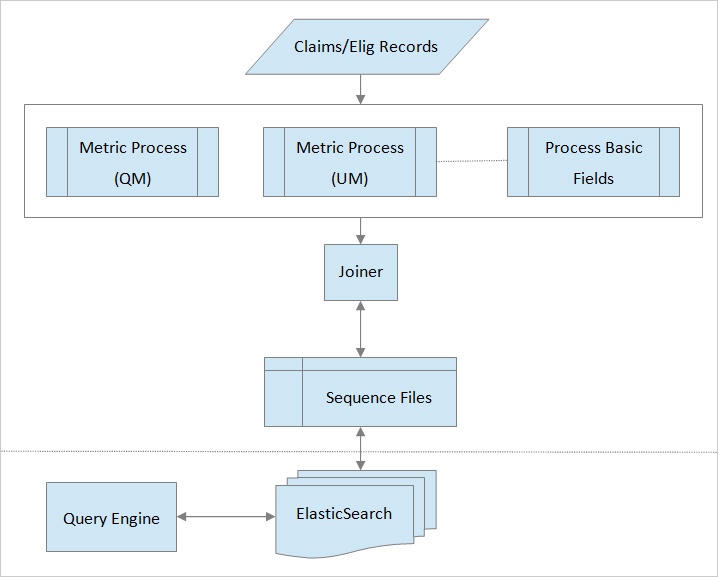

Overall Structure

Storage Design

We add “Member”, a very complex logical data object, to the list of primary records. This simply means that we store only a basic transformation of the source records plus very basic member-level metrics. We then design the metric structure so that all reports are dynamic queries instead of pre-calculations. The mapping for a member record with just the Utilization Metric (the “um” field) looks like this:

{
  "MemberSearch": {
    "properties": {
      "currentStatus": {"type": "string", "index": "not_analyzed"},
      "effectiveDate": {"type": "date", "format": "dateOptionalTime"},
      "eligibleMonths": {"type": "date", "format": "dateOptionalTime"},
      "groupId": {"type": "string", "index": "not_analyzed"},
      "groupName": {"type": "string", "index": "not_analyzed"},
      "lobPlanType": {"type": "string", "index": "not_analyzed"},
      "medTotalPaidAmount": {"type": "long"},
      "memberFirstName": {
        "type": "multi_field",
        "fields": {
          "memberFirstName": {"type": "string", "index": "not_analyzed"},
          "contains": {
            "type": "string",
            "index_analyzer": "containsAnalyzer",
            "search_analyzer": "standardAnalyzer",
            "include_in_all": false
          }
        }
      },
      "memberId": {
        "type": "multi_field",
        "fields": {
          "memberId": {"type": "string", "index": "not_analyzed"},
          "contains": {
            "type": "string",
            "index_analyzer": "containsAnalyzer",
            "search_analyzer": "standardAnalyzer",
            "include_in_all": false
          }
        }
      },
      "um": {
        "type": "nested",
        "properties": {
          "serviceMonth": {"type": "date", "format": "dateOptionalTime"},
          "paidMonth": {"type": "date", "format": "dateOptionalTime"},
          "value": {"type": "long"},
          "paidAmount": {"type": "double"},
          "allowedAmount": {"type": "double"}
        }
      }
    }
  }
}

We keep adding metrics to this structure. As a simple example, we add the Quality Metric with a structure similar to that of the Utilization Metric. The structure of each added metric depends on its complexity, which in turn depends on the number and complexity of the queries it is expected to serve. For example, a simple use of the metric above is to find the total number of visits for a client over a specific period of time. Beyond that, the structure of each group of metrics keeps changing with the query requirements. For example, the current structure of the Quality Metric is: populationList_01-2012: ["CAD_1", "Asthma_2"], numeratorList_01-2012: ["CAD_1"]. In other words, for each month it stores the list of Quality Metrics whose conditions the member meets. However, we need to change this structure to accommodate active and non-active members in the metric.

In short, the stored data is either a direct transformation of source records or an aggregation for a member.
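As a minimal sketch of the member-level aggregation described above, the following Python rolls a member's source claims up into "um" entries and then answers the "total number of visits for a period" question from those entries. The claim field names (serviceDate, paidDate, paidAmount, allowedAmount), the function names build_member_record and total_visits, and the rule that one claim counts as one visit are all assumptions made for illustration; only the "um" field names come from the mapping above.

```python
from collections import defaultdict


def build_member_record(member_id, claims):
    """Roll up one member's claims into `um` entries keyed by
    (serviceMonth, paidMonth). Claim field names are hypothetical."""
    buckets = defaultdict(
        lambda: {"value": 0, "paidAmount": 0.0, "allowedAmount": 0.0}
    )
    for claim in claims:
        # Dates are assumed to be ISO strings; the first 7 chars are "YYYY-MM".
        key = (claim["serviceDate"][:7], claim["paidDate"][:7])
        bucket = buckets[key]
        bucket["value"] += 1  # assumption: one claim counts as one visit
        bucket["paidAmount"] += claim["paidAmount"]
        bucket["allowedAmount"] += claim["allowedAmount"]
    um = [
        {"serviceMonth": service_month, "paidMonth": paid_month, **totals}
        for (service_month, paid_month), totals in sorted(buckets.items())
    ]
    return {"memberId": member_id, "um": um}


def total_visits(member_records, start_month, end_month):
    """Sum `value` over all `um` entries whose serviceMonth lies in the
    inclusive range. "YYYY-MM" strings compare correctly as text."""
    return sum(
        entry["value"]
        for record in member_records
        for entry in record["um"]
        if start_month <= entry["serviceMonth"] <= end_month
    )


claims = [
    {"serviceDate": "2012-01-05", "paidDate": "2012-02-01",
     "paidAmount": 100.0, "allowedAmount": 120.0},
    {"serviceDate": "2012-01-20", "paidDate": "2012-02-10",
     "paidAmount": 50.0, "allowedAmount": 60.0},
    {"serviceDate": "2012-03-02", "paidDate": "2012-03-15",
     "paidAmount": 30.0, "allowedAmount": 45.0},
]
record = build_member_record("M001", claims)
print(total_visits([record], "2012-01", "2012-02"))
```

The point of the sketch is that the runtime question never touches individual claims: it only reads the small, pre-aggregated entries stored on each member.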

Report Query

Flexibility in querying is very important. Every report we provide is a query built on top of the search module, and even an end user can write custom reports with a query pattern of their own, combining filters and aggregations at several metric levels. For example, the Quality Metric report is a “Filter” on time and a “SumIt” by Quality Metric name, after which the two time-based reports are put together. This power is available for all the reports we provide. It means that advanced users of the analytics can build the reports they need instead of having us build each one for them.

This flexibility requires tremendous effort in designing the data structures and queries. Having flexible and robust queries on top of primary records is an important part of the whole design process: we have to design the data structure wisely so that we can serve more and more reports out of a single metric while, at the same time, reducing the number of queries per report. With just primary records as storage, some complex reports require long research into alternatives to make them fast enough. For example, the Utilization Metric ended up with a very long-running query because of its storage structure. We had to change that structure seven times, and we are still studying alternatives to make it faster without any loss of query flexibility.
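The “Filter” on time and “SumIt” by Quality Metric name pattern can be illustrated with a small in-memory sketch. It is not the search module itself: plain Python stands in for the real queries, the function names sum_it and quality_report are hypothetical, and the member records simply reuse the populationList_MM-YYYY and numeratorList_MM-YYYY field naming quoted earlier.

```python
from collections import Counter


def sum_it(members, list_name, month):
    """'Filter' on one month, then 'SumIt' by Quality Metric name:
    count how many members list each metric in `<list_name>_<month>`."""
    counts = Counter()
    for member in members:
        counts.update(member.get(f"{list_name}_{month}", []))
    return counts


def quality_report(members, months):
    """Put the per-month reports together:
    metric -> month -> (numerator count, population count)."""
    report = {}
    for month in months:
        population = sum_it(members, "populationList", month)
        numerator = sum_it(members, "numeratorList", month)
        for metric, pop_count in population.items():
            report.setdefault(metric, {})[month] = (
                numerator.get(metric, 0),
                pop_count,
            )
    return report


# Illustrative data only; member ids and values are made up.
members = [
    {"memberId": "M1",
     "populationList_01-2012": ["CAD_1", "Asthma_2"],
     "numeratorList_01-2012": ["CAD_1"],
     "populationList_02-2012": ["CAD_1"],
     "numeratorList_02-2012": ["CAD_1"]},
    {"memberId": "M2",
     "populationList_01-2012": ["CAD_1"],
     "numeratorList_01-2012": [],
     "populationList_02-2012": ["CAD_1"],
     "numeratorList_02-2012": ["CAD_1"]},
]
report = quality_report(members, ["01-2012", "02-2012"])
print(report["CAD_1"])
```

Because the report is only a composition of a filter and a sum, a user could swap in a different period or a different list name without any new pre-calculation.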

Data Distribution

A member is a logical grouping of records such that each group is highly cohesive and only loosely coupled to other members. This frees us from keeping metrics at too low a level, and it also gives us a good basis for distribution: there are few relationships across groups. Because of this, a member can be processed safely and independently of any other member. Processing scalability is then just a matter of not coupling multiple members together, and we take this to its full extent by calculating metrics only per member. The records generated by the engine are either member records or plain source claims.

Since the only part that is not distributed is the individual member, the limit on scaling is the number of records per member: in most cases a member is processed on a single machine and cannot be split across machines. This is hardly a limitation in practice, as we do not expect the records of a single member required for one metric calculation to outgrow memory. We also try to limit the number of fields used in a metric, and each module receives only the fields it requires instead of the whole data set. This approach keeps us from hitting memory limits while processing a member.
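The distribution idea can be sketched as follows, assuming a simple hash-based assignment of members to workers. The names partition_for, distribute and paid_total, the use of crc32 as the hash, and the sample fields are all hypothetical choices for illustration; the text above does not say how members are actually assigned to machines.

```python
import zlib
from collections import defaultdict


def partition_for(member_id, num_workers):
    """Stable assignment of a member to a worker
    (crc32 is an illustrative choice, not the engine's actual scheme)."""
    return zlib.crc32(member_id.encode("utf-8")) % num_workers


def distribute(records, num_workers, required_fields):
    """Group records by member, keep only the fields a module needs, and
    assign each member's whole group to exactly one worker."""
    workers = [defaultdict(list) for _ in range(num_workers)]
    for rec in records:
        slim = {f: rec[f] for f in required_fields if f in rec}
        worker = workers[partition_for(rec["memberId"], num_workers)]
        worker[rec["memberId"]].append(slim)
    return workers


def paid_total(member_records):
    """Example per-member metric; it needs nothing from any other member."""
    return sum(r["paidAmount"] for r in member_records)


# Illustrative records only; field names and values are made up.
records = [
    {"memberId": "M1", "paidAmount": 10.0, "providerName": "unused"},
    {"memberId": "M2", "paidAmount": 7.0, "providerName": "unused"},
    {"memberId": "M1", "paidAmount": 5.0, "providerName": "unused"},
]
workers = distribute(records, 3, ["paidAmount"])
totals = {
    member_id: paid_total(recs)
    for worker in workers
    for member_id, recs in worker.items()
}
print(totals)
```

Each worker holds complete members only, so the per-member metric can run on any worker without coordination, and dropping the unused fields keeps the per-member footprint small.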

Conclusion

The decision to put all metrics into the member record has given us the flexibility to form complex, usable, live reports on the fly, and a scalable engine that processes metrics for each member independently. However, for complex reports it is almost impossible to express every report as a single query, so those reports may be a little slower. We therefore keep working towards better metric structures, with the target of a single-query report for every report we intend to serve.